AO3 spam, more AI toy fails, the myth of Mythos, and other LLM news

Apr. 26th, 2026 10:00 pm

Official AO3 newspost: “Below is a list of different types of spam comments that have been posted on AO3 over the last year. […] None of the accusations these spam comments make are true. The bots are merely spamming false accusations in order to alarm or harass AO3 users. It is generally safe to ignore these comments once you’ve removed and/or reported them as outlined below.”

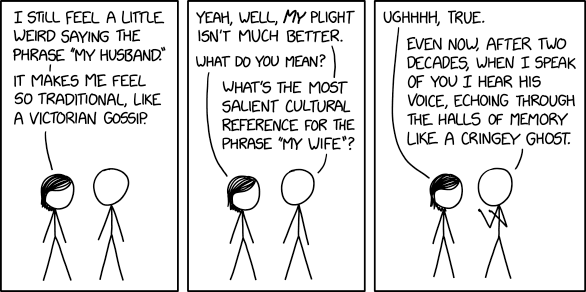

“When one five-year-old said, “I love you,” to the toy, it replied: “As a friendly reminder, please ensure interactions adhere to the guidelines provided. Let me know how you would like to proceed.”” (This article claims professionals are “divided” over the potential of LLM toys…even though they only managed to find professionals who say the toys are bad for kids.)

“Back in August of last year, Grammarly shipped a feature called Expert Review, which allowed you to get writing suggestions from AI-cloned “experts,” and reporters at The Verge and other outlets discovered that those experts included us. It included me. […] I’ve been an editor for over 15 years. I’ve literally never said anything like that.”

“Ikonomou emailed the journal on September 23 requesting the removal of the article and also asked for an explanation for “how this submission was accepted given the fake email address and affiliation.” On October 6, a representative from the publisher named Dwayne Harrison emailed back saying the journal would need a “confirmation regarding the withdrawal charges,” telling Ikonomou he would have to pay a fee.”

“There were several other instances where it wrote c++ code that was technically correct, but horrible inefficient […] I also had a instance where a file was being read from the wrong path and instead of prepending the right path it tried to completely rewrite my library. Ironically it also had a problem with const. It recompiled the program three times randomly changing where const appeared. I feel for ya. I have spent a lot time over this experiment correcting AI.”

“Last week, Anthropic surprised the world by declaring that its latest model, Mythos, is so good at finding vulns that it would create chaos if released. […] But just how many problems have they really discovered? According to VulnCheck researcher Patrick Garrity, the answer is…drumroll…maybe 40. Or maybe none at all.”

“The flagship demonstration document [of “Mythos”] turns out to be like the ending of the Wizard of Oz, a sorry disappointment about a model weaponizing two bugs that a different model found, in software the vendor had already patched, in a test environment with the browser sandbox and defense-in-depth mitigations stripped out. Anthropic failed, and somehow the story was flipped into a warning about its success. Whomp. Whomp. Sad trombone.”